Hi Maureen,

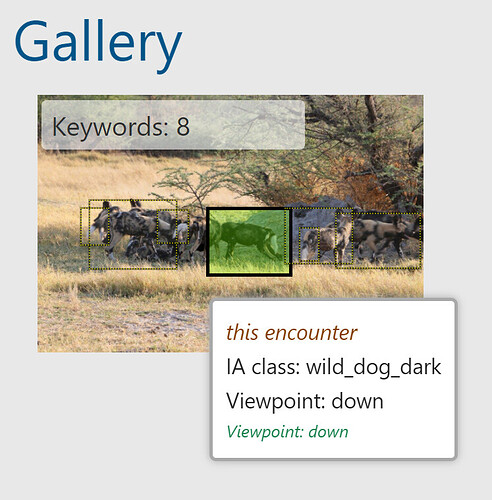

This was a detection prediction of “down” (rather than the expected prediction of “left”) on the viewpoint, causing it to match against what looks like a rogue’s gallery of other “down” and down-related (mis)predictions.

It may be that “down” is a viewpoint class with insufficient data. If we see a lot of this, then we may eventually need to retrain the model with additional “down” examples or rebalance the dataset to do better at this viewpoint versus others.

With the misprediction of down, the system then actually behaved as designed and filtered on related viewpoints (where they were actual “down” viewpoints or not).

The workaround is to delete the annotation and redraw it manually, setting the correct viewpoint. Let’s monitor for more of these.

Thanks,

Jason